Use Kerberos with Spark Submit

Learn how to execute Spark Submit jobs on secure Cloudera Hadoop clusters.

This article explains how to run Spark Submit jobs on secure Cloudera Hadoop clusters, version 5.7 and later, using Kerberos authentication. You submit Spark jobs to a secure cluster by adding keytab and principal utility parameter values to the job. These values enable Kerberos authentication for Spark.

Prerequisites

The following prerequisites must be completed before running the Spark jobs:

- A Spark client must be installed. Refer to the article Spark Submit for information on installing and configuring the Spark client.

- The cluster must be secured with Kerberos, and the Kerberos server used by the cluster must be accessible to the Pentaho Server.

- The Pentaho computer must have Kerberos installed and configured as explained in Set Up Kerberos for Pentaho.
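As a rough pre-flight check for the first and third prerequisites, the sketch below looks for the client tools on the `PATH` of the Pentaho computer. The tool names `spark-submit` and `kinit` are assumptions about a typical Spark client and MIT Kerberos installation, and `missing_tools` is a hypothetical helper, not part of Pentaho. It cannot confirm that the cluster's Kerberos server is reachable.

```python
import shutil


def missing_tools(tool_names):
    """Return the names from tool_names that cannot be found on the PATH."""
    return [name for name in tool_names if shutil.which(name) is None]


# Assumed tool names: adjust them to match the local installation.
required = ["spark-submit", "kinit"]
for name in missing_tools(required):
    print(f"Not found on PATH: {name}")
```

An empty result only shows that the tools are installed. Kerberos configuration still has to follow Set Up Kerberos for Pentaho.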

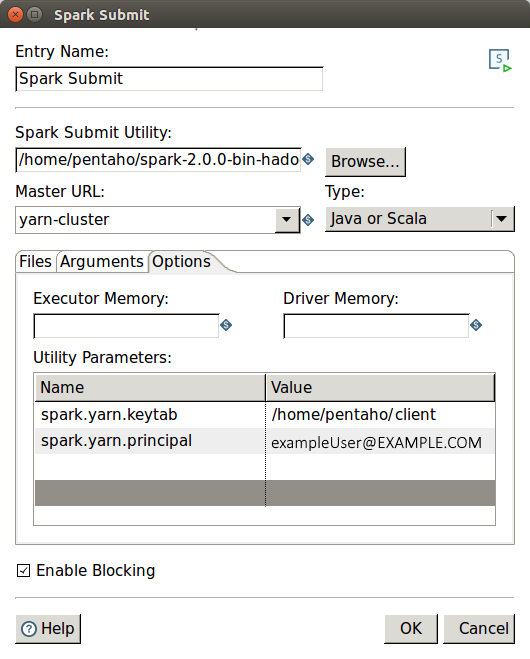

Spark Submit entry properties

Configure your job setup with the parameters in the following table:

| Parameter | Value |
| --- | --- |
| Entry Name | Name of the entry. You can customize this, or leave it as the default. |
| Spark Submit Utility | Script that launches the Spark job. |
| Master URL | The master URL for the cluster. Two options are supported: |
| Type | The file type of your Spark job to be submitted. Your job can be written in Java, Scala, or Python. The fields displayed in the Files tab will depend on what language option you select. |
| Utility Parameters | Name and Value of optional Spark configuration parameters associated with the spark-defaults.conf file. Add the following name and value pairs: |
| Enable Blocking | This option is enabled by default. If this option is selected, the job entry waits until the Spark job finishes running. If it is not, the job entry proceeds with its execution once the Spark job is submitted for execution. |

Authentication via password is not supported.
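To make the keytab and principal settings concrete, here is a minimal sketch of an equivalent `spark-submit` command line. It assumes that the keytab and principal are supplied through the Spark on YARN properties `spark.yarn.keytab` and `spark.yarn.principal`, and that each utility parameter is passed as a `--conf name=value` pair. The function `build_spark_submit_command`, the master URL, the keytab path, the principal, and the application file are all hypothetical placeholders.

```python
import shlex


def build_spark_submit_command(spark_submit, master_url, keytab, principal,
                               app_file, app_args=()):
    """Assemble a spark-submit argument list with Kerberos settings as --conf pairs."""
    # Assumed property names for Spark on YARN; verify them for your Spark version.
    utility_parameters = {
        "spark.yarn.keytab": keytab,        # path to the keytab file
        "spark.yarn.principal": principal,  # principal stored in that keytab
    }
    command = [spark_submit, "--master", master_url]
    for name, value in utility_parameters.items():
        command += ["--conf", f"{name}={value}"]
    command.append(app_file)
    command.extend(app_args)
    return command


command = build_spark_submit_command(
    spark_submit="spark-submit",
    master_url="yarn-cluster",        # assumed Spark 1.x style value
    keytab="/path/to/user.keytab",    # hypothetical path
    principal="user@EXAMPLE.COM",     # hypothetical principal
    app_file="my_job.py",             # hypothetical application file
)
print(shlex.join(command))
```

Because a keytab is used, no password is needed at submission time, which is consistent with password authentication not being supported.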