Using the Text File Input step on the Spark engine

You can set up the Text file input step to run on the Spark engine. Spark processes null values differently than the Pentaho engine, so you may need to adjust your transformation to process null values following Spark's processing rules.

If you are running your transformation on the Spark engine, use the following instructions to set up the Text File Input step.

General

Enter the following information in the transformation step name field:

- Step name: Specify the unique name of the Text file input step on the canvas. You can customize the name or leave it as the default.

You can use Preview rows to display the rows generated by this step. The Text file input step determines which rows to input based on the information you provide in the option tabs, so previewing helps you confirm that this information accurately models the rows you are trying to retrieve.

Options

The Text file input step features several tabs with fields. Each tab is described below.

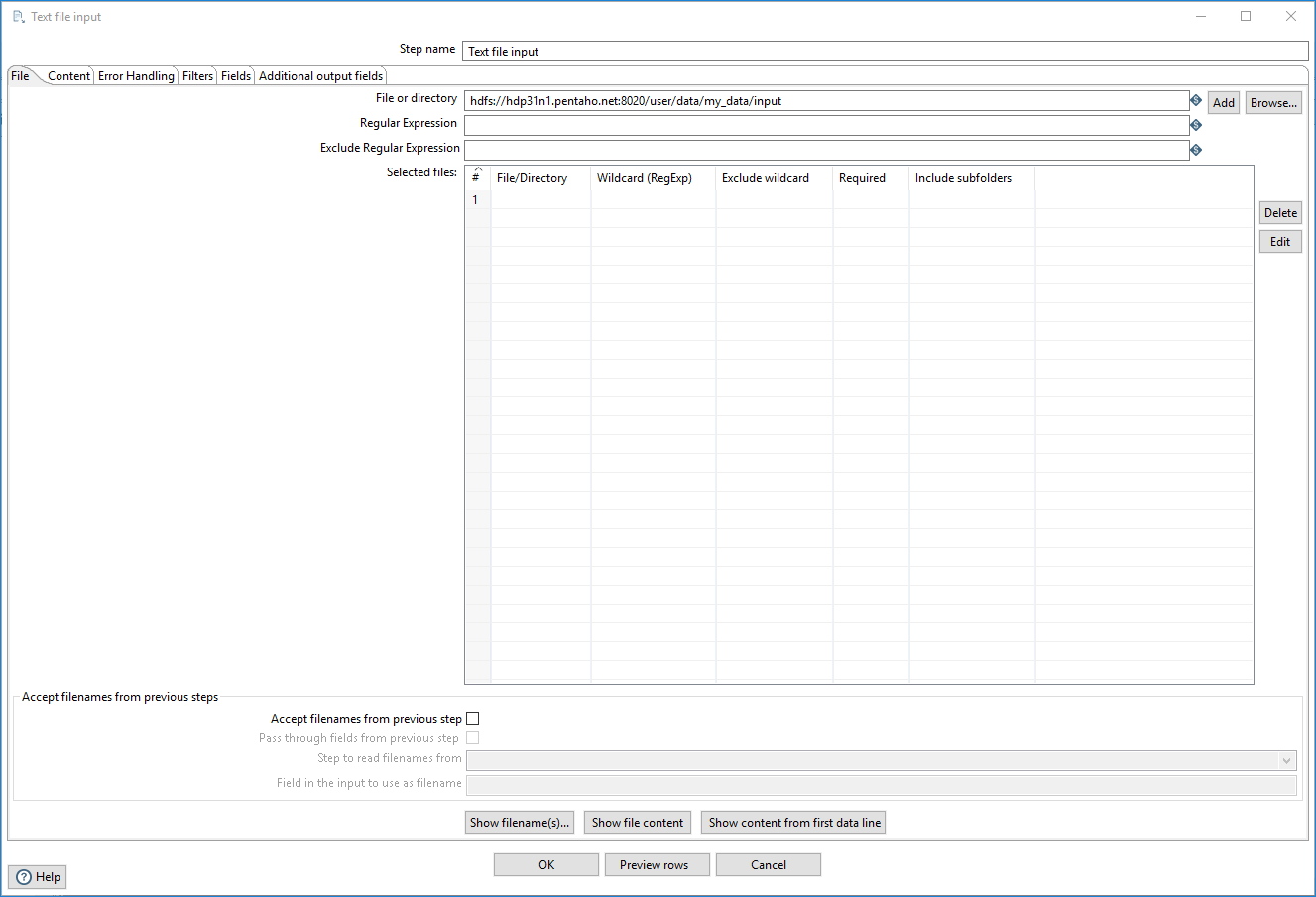

File tab

Use the File tab to enter the following connection information for your source.

| Option | Description |
| --- | --- |
| File or directory | Specify the source location if the source is not defined in a field. Click Browse to display the Open File window and navigate to the file or folder. For the supported file system types, see Connecting to Virtual File Systems. Click Add to include the source in the Selected files table. If the source location is defined in a field, use the Accept filenames from previous steps option to specify your file name. |
| Regular expression | Specify a regular expression to match filenames within a specified directory. |
| Exclude regular expression | Specify a regular expression to exclude filenames within a specified directory. |

Regular expressions

Use the Wildcard (RegExp) field in the File tab to search for files by wildcard in the form of a regular expression. Regular expressions are more sophisticated than using * and ? wildcards. This table describes several examples of regular expressions.

| Directory | Regular Expression | Files Selected |
| --- | --- | --- |
| /dirA/ | `.*userdata.*\.txt` | Find all files in /dirA/ with names containing userdata and ending with .txt |
| /dirB/ | `AAA.*` | Find all files in /dirB/ with names that start with AAA |
| /dirC/ | `[A-Z][0-9].*` | Find all files in /dirC/ with names that start with a capital letter followed by a digit (A0-Z9) |
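To check an expression before adding it to the Selected files table, you can try it against sample file names. The following Java sketch uses `java.util.regex.Pattern` and assumes that an expression must match the entire file name, as `Matcher.matches()` requires, rather than just a substring. The class name `WildcardDemo` and the sample names are illustrative only.

```java
import java.util.List;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

public class WildcardDemo {
    // Keeps only the file names that the regular expression matches in full.
    // Assumption: the expression must match the whole name, not just a substring.
    public static List<String> select(String regex, List<String> fileNames) {
        Pattern pattern = Pattern.compile(regex);
        return fileNames.stream()
                .filter(name -> pattern.matcher(name).matches())
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<String> names = List.of(
                "old_userdata_1.txt", "userdata.csv", "AAA_report.log", "B7_summary.txt", "b7.txt");
        // Backslashes are doubled inside Java string literals.
        System.out.println(select(".*userdata.*\\.txt", names)); // [old_userdata_1.txt]
        System.out.println(select("AAA.*", names));              // [AAA_report.log]
        System.out.println(select("[A-Z][0-9].*", names));       // [B7_summary.txt]
    }
}
```

Note that `b7.txt` is not selected by the third expression because `[A-Z]` matches only capital letters.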

Selected files table

The Selected files table shows files or directories to use as source locations for input. This table is populated by clicking Add after you specify a File or directory. The input step tries to connect to the specified file or directory when you click Add to include it in the table.

The table contains the following columns:

| Column | Description |
| --- | --- |
| File/Directory | The source location added by clicking Add after specifying it in File or directory. |
| Wildcard (RegExp) | Specify a regular expression to match filenames within a specified directory. |
| Exclude wildcard | Specify a regular expression to exclude filenames within a specified directory. |
| Required | Whether the source location is required for input. |
| Include subfolders | Whether subfolders of the source location are included. |
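The following Java sketch models how the Wildcard (RegExp), Exclude wildcard, and Include subfolders columns might combine for one row of the table. It rests on assumptions, not on the step's implementation: both expressions are applied to the file name only, exclusion wins over inclusion, and candidate paths are given relative to the File/Directory entry with `/` separators. `SelectedFilesDemo` and `resolve` are hypothetical names.

```java
import java.util.List;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

public class SelectedFilesDemo {
    // Hypothetical resolution of one Selected files row.
    // relativePaths: candidate files relative to the File/Directory entry, '/'-separated.
    // excludeWildcard may be null when the Exclude wildcard column is empty.
    public static List<String> resolve(List<String> relativePaths, String wildcard,
                                       String excludeWildcard, boolean includeSubfolders) {
        Pattern include = Pattern.compile(wildcard);
        Pattern exclude = excludeWildcard == null ? null : Pattern.compile(excludeWildcard);
        return relativePaths.stream()
                // Without Include subfolders, only files directly in the directory qualify.
                .filter(path -> includeSubfolders || !path.contains("/"))
                .filter(path -> {
                    String name = path.substring(path.lastIndexOf('/') + 1);
                    boolean included = include.matcher(name).matches();
                    boolean excluded = exclude != null && exclude.matcher(name).matches();
                    return included && !excluded; // exclusion wins
                })
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<String> files = List.of("sales.txt", "sales_old.txt", "archive/sales_2019.txt", "notes.md");
        System.out.println(resolve(files, ".*\\.txt", ".*_old.*", false)); // [sales.txt]
        System.out.println(resolve(files, ".*\\.txt", ".*_old.*", true));  // [sales.txt, archive/sales_2019.txt]
    }
}
```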

Click Delete to remove a source from the table. Click Edit to remove a source from the table and return it to the File or directory option.

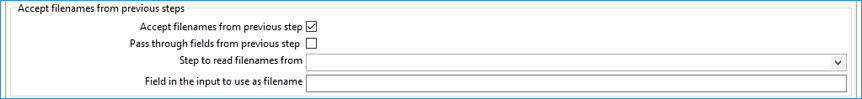

Accept file names

These fields are not used by the Spark engine.

Show action buttons

When you have entered information in the File tab fields, select an action button if you want to look at the source file names or data content.

| Button | Description |
| --- | --- |
| Show filename(s) | Select to display the file names of the sources connected to the step. |
| Show file content | Select to display the raw content of the selected file. |
| Show content from first data line | Select to display the content from the first data line for the selected file. |

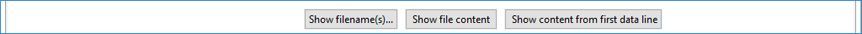

Content tab

Use the following options in the Content tab to specify the format of the source files.

| Option | Description |
| --- | --- |
| Filetype | Select either CSV or Fixed length. Depending on the file type you select, a corresponding interface appears when you click Get Fields in the Fields tab. |
| Separator | Specify the character used to separate the fields in a single line of text, typically a semicolon or tab. Click Insert Tab to place a tab in the Separator field. The default value is semicolon (;). |
| Enclosure | Specify an optional character used to enclose a field if that field contains a separator character. The default value is double quotation marks ("). |
| Allow breaks in enclosed fields | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Escape | Specify one or more characters that mark the character that follows as part of the regular text rather than as a separator or enclosure. For example, if a backslash (`\`) is the escape character and a single quote (') is an enclosure or separator character, then the text `Not the nine o\'clock news` is parsed as `Not the nine o'clock news`. |
| Header | Select if your text file has a header row (the first lines in the file), and set the number of header lines to 1 (one). |
| Footer | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Wrapped lines | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Paged layout (printout) | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Compression | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| No empty rows | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Include filename in output | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Rownum in output | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Format | Select UNIX. |
| Encoding | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Length | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Limit | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Be lenient when parsing dates? | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| The date format Locale | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
| Add filenames to result | This field is either not used by the Spark engine or not implemented for Spark on AEL. |
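The Separator, Enclosure, and Escape options interact when a line is split into fields. The following simplified Java sketch shows one plausible interpretation: a separator inside an enclosure is kept as data, and the escape character makes the next character literal. It is not the Spark or Pentaho parser. It handles single characters only and ignores doubled enclosures and fields that span lines. `DelimitedLineDemo` is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.List;

public class DelimitedLineDemo {
    // Splits one line into fields using a separator, an enclosure, and an escape character.
    public static List<String> split(String line, char separator, char enclosure, char escape) {
        List<String> fields = new ArrayList<>();
        StringBuilder current = new StringBuilder();
        boolean enclosed = false;
        for (int i = 0; i < line.length(); i++) {
            char c = line.charAt(i);
            if (c == escape && i + 1 < line.length()) {
                current.append(line.charAt(++i)); // keep the escaped character literally
            } else if (c == enclosure) {
                enclosed = !enclosed;             // enter or leave an enclosed field
            } else if (c == separator && !enclosed) {
                fields.add(current.toString());   // a separator outside an enclosure ends the field
                current.setLength(0);
            } else {
                current.append(c);
            }
        }
        fields.add(current.toString());
        return fields;
    }

    public static void main(String[] args) {
        // Content tab defaults: separator ';' and enclosure '"'.
        System.out.println(split("1;\"Smith; John\";NY", ';', '"', '\\')); // [1, Smith; John, NY]
        // Escape example from the table: backslash escape, single quote as enclosure.
        System.out.println(split("Not the nine o\\'clock news", ';', '\'', '\\')); // [Not the nine o'clock news]
    }
}
```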

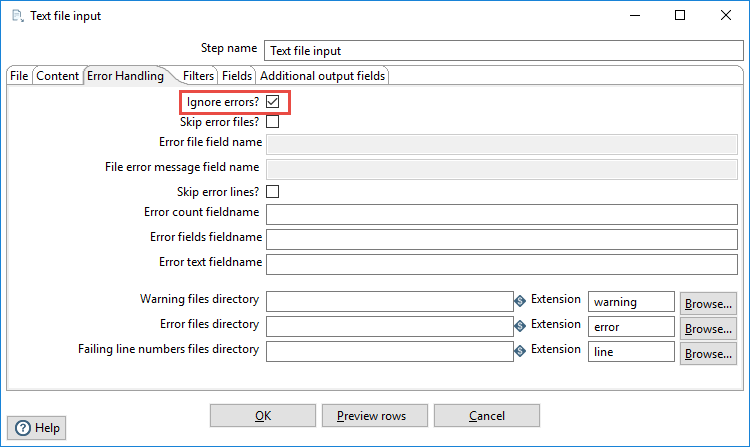

Error Handling tab

For the Text file input step to work in the Spark environment, you must select the Ignore errors field. The other fields are ignored by the Spark engine, so these fields can remain empty.

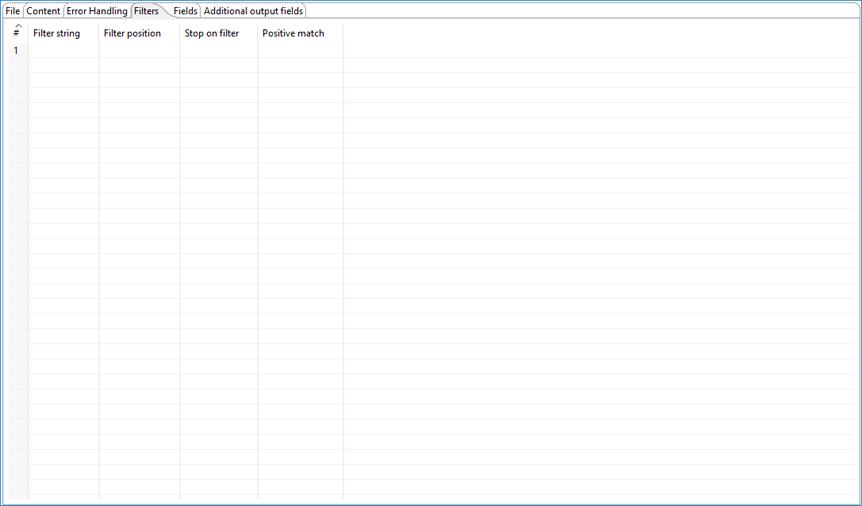

Filters tab

In the Filters tab, you can specify the lines you want to skip in the text file.

| Column | Description |
| --- | --- |
| Filter string | The string for which to search. |
| Filter position | The position where the filter string must be placed in the line. Zero (0) is the first position in the line. If you specify a value below zero, the filter string is searched for in the entire line. |
| Stop on filter | Enter Y if you want to stop processing the current text file when the filter string is encountered. |
| Positive match | When selected, turns the filter into a positive filter: only lines that match it are passed. Negative filters take precedence, and lines that match a negative filter are discarded immediately. |
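The following Java sketch illustrates how Filter string, Filter position, and Positive match could decide whether a line is kept, with negative filters taking precedence as described above. Stop on filter is not modeled, `LineFilterDemo` and its `Filter` record are hypothetical, and the step's actual evaluation order may differ. Records require Java 16 or later.

```java
import java.util.List;

public class LineFilterDemo {
    // Hypothetical model of one Filters tab row (Stop on filter is not modeled).
    public record Filter(String text, int position, boolean positive) {}

    // A filter hits when its text starts at the given zero-based position,
    // or appears anywhere in the line when the position is below zero.
    static boolean hits(Filter filter, String line) {
        return filter.position() < 0
                ? line.contains(filter.text())
                : line.startsWith(filter.text(), filter.position());
    }

    // A negative hit discards the line regardless of the positive filters;
    // when positive filters exist, at least one of them must hit.
    public static boolean keep(List<Filter> filters, String line) {
        boolean hasPositive = false;
        boolean positiveHit = false;
        for (Filter filter : filters) {
            if (filter.positive()) {
                hasPositive = true;
                positiveHit |= hits(filter, line);
            } else if (hits(filter, line)) {
                return false;
            }
        }
        return !hasPositive || positiveHit;
    }

    public static void main(String[] args) {
        List<Filter> filters = List.of(
                new Filter("#", 0, false),      // skip comment lines
                new Filter("ERROR", -1, true)); // keep only lines mentioning ERROR
        System.out.println(keep(filters, "2024-01-01 ERROR disk full")); // true
        System.out.println(keep(filters, "# ERROR in a comment"));       // false
        System.out.println(keep(filters, "2024-01-01 INFO started"));    // false
    }
}
```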

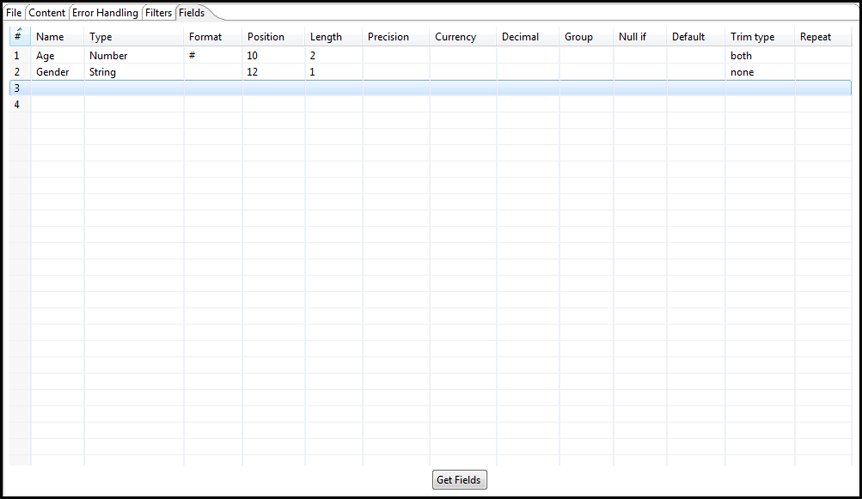

Fields tab

In the Fields tab, specify the name and format of the fields being read from the text file.

| Option | Description |
| --- | --- |
| Name | Name of the field. |
| Type | Type of the field: String, Date, or Number. |
| Format | See Number formats and Date formats for a description of the format symbols. |
| Position | Required when processing the Fixed length file type. Positions are zero-based, so the first character is at position 0. |
| Length | The value of this field depends on the field type: for Number, the total number of significant figures; for String, the total length of the string; for Date, the length of the printed output (for example, 4 returns only the year). |
| Precision | The value of this field depends on the field type: for Number, the number of floating-point digits; for String and Date, it is not used. |
| Currency | Used to interpret numbers such as $10,000.00 or €5.000,00. |
| Decimal | A decimal point can be a period (.) as in 10,000.00 or a comma (,) as in 5.000,00. |
| Group | A grouping can be a comma (,) as in 10,000.00 or a period (.) as in 5.000,00. |
| Null if | Treat this value as null. |
| Default | Default value in case the field in the text file was not specified (empty). |
| Trim type | The trimming to apply to the field value before processing: none, left, right, or both. |
| Repeat | If the corresponding value in this row is empty, repeat the one from the last time it was not empty (Y or N). |
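For the Fixed length file type, Position and Length select a slice of each line. The following Java sketch combines that slicing with Trim type (both), Repeat, Default, and Null if. The order in which these options are applied here is an assumption, and `FixedWidthDemo` and its `Field` record are hypothetical names, not part of the step. Records require Java 16 or later.

```java
import java.util.ArrayList;
import java.util.List;

public class FixedWidthDemo {
    // Hypothetical subset of the Fields tab columns for one field.
    public record Field(String name, int position, int length,
                        String nullIf, String defaultValue, boolean repeat) {}

    public static List<List<String>> read(List<String> lines, List<Field> fields) {
        List<List<String>> rows = new ArrayList<>();
        String[] lastNonEmpty = new String[fields.size()]; // remembered values for Repeat
        for (String line : lines) {
            List<String> row = new ArrayList<>();
            for (int i = 0; i < fields.size(); i++) {
                Field field = fields.get(i);
                // Zero-based Position plus Length, clipped to short lines.
                int start = Math.min(field.position(), line.length());
                int end = Math.min(field.position() + field.length(), line.length());
                String value = line.substring(start, end).strip(); // Trim type: both
                if (value.isEmpty() && field.repeat() && lastNonEmpty[i] != null) {
                    value = lastNonEmpty[i];                        // Repeat: Y
                }
                if (!value.isEmpty()) {
                    lastNonEmpty[i] = value;
                } else if (field.defaultValue() != null) {
                    value = field.defaultValue();                   // Default
                }
                row.add(value.equals(field.nullIf()) ? null : value); // Null if
            }
            rows.add(row);
        }
        return rows;
    }

    public static void main(String[] args) {
        List<Field> fields = List.of(
                new Field("region", 0, 5, null, null, true),
                new Field("amount", 5, 6, "-", "0", false));
        List<String> lines = List.of("NORTH   120", " ".repeat(10) + "-", "SOUTH");
        System.out.println(read(lines, fields)); // [[NORTH, 120], [NORTH, null], [SOUTH, 0]]
    }
}
```

In the second line the empty region is repeated from the first line and the amount `-` becomes null; in the third line the missing amount falls back to its default.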

Number formats

Use the following table to specify number formats. For further information on valid numeric formats used in this step, view the Number Formatting Table.

| Symbol | Location | Localized | Meaning |
| --- | --- | --- | --- |
| `0` | Number | Yes | Digit. |
| `#` | Number | Yes | Digit, zero shows as absent. |
| `.` | Number | Yes | Decimal separator or monetary decimal separator. |
| `-` | Number | Yes | Minus sign. |
| `,` | Number | Yes | Grouping separator. |
| `E` | Number | Yes | Separates mantissa and exponent in scientific notation. Need not be quoted in prefix or suffix. |
| `;` | Subpattern boundary | Yes | Separates positive and negative patterns. |
| `%` | Prefix or suffix | Yes | Multiply by 100 and show as percentage. |
| `‰` (`\u2030`) | Prefix or suffix | Yes | Multiply by 1000 and show as per mille. |
| `¤` (`\u00A4`) | Prefix or suffix | No | Currency sign, replaced by currency symbol. If doubled, replaced by international currency symbol. If present in a pattern, the monetary decimal separator is used instead of the decimal separator. |
| `'` | Prefix or suffix | No | Used to quote special characters in a prefix or suffix, for example, `'#'#` formats 123 to `#123`. To create a single quote itself, use two in a row: `# o''clock`. |
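The symbols in this table correspond to those of Java's `java.text.DecimalFormat`. Assuming the step interprets patterns the same way, the following sketch shows a few of them, including parsing 5.000,00 with a comma decimal symbol and a period grouping symbol, similar to setting the Decimal and Group columns in the Fields tab. `NumberFormatDemo` is a hypothetical class, and `Locale.US` is used so the output does not depend on the machine's locale.

```java
import java.text.DecimalFormat;
import java.text.DecimalFormatSymbols;
import java.text.ParseException;
import java.util.Locale;

public class NumberFormatDemo {
    // Formats a value with a pattern, using US symbols ('.' decimal, ',' grouping).
    public static String format(String pattern, double value) {
        return new DecimalFormat(pattern, DecimalFormatSymbols.getInstance(Locale.US)).format(value);
    }

    // Parses text whose decimal and grouping symbols are overridden.
    // The pattern itself is still written with the standard '.' and ',' characters.
    public static Number parse(String pattern, String text, char decimal, char group) {
        DecimalFormatSymbols symbols = DecimalFormatSymbols.getInstance(Locale.US);
        symbols.setDecimalSeparator(decimal);
        symbols.setGroupingSeparator(group);
        try {
            return new DecimalFormat(pattern, symbols).parse(text);
        } catch (ParseException e) {
            throw new IllegalArgumentException("Cannot parse: " + text, e);
        }
    }

    public static void main(String[] args) {
        System.out.println(format("#,##0.00", 10000)); // 10,000.00
        System.out.println(format("0.00", 3.14159));   // 3.14
        System.out.println(format("#0%", 0.25));       // 25%
        System.out.println(format("'#'#", 123));       // #123
        System.out.println(parse("#,##0.00", "5.000,00", ',', '.')); // 5000
    }
}
```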

Scientific notation

In a pattern, the exponent character immediately followed by one or more digit characters indicates scientific notation. For example, 0.###E0 formats the number 1234 as 1.234E3.
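Assuming the same `java.text.DecimalFormat` semantics, this sketch reproduces the example above and adds two variations: an engineering-style pattern, where a maximum of three integer digits forces exponents that are multiples of 3, and a pattern with a fixed minimum of two integer digits. `ScientificDemo` is a hypothetical class name.

```java
import java.text.DecimalFormat;
import java.text.DecimalFormatSymbols;
import java.util.Locale;

public class ScientificDemo {
    // Formats with a scientific-notation pattern using US symbols.
    public static String format(String pattern, double value) {
        return new DecimalFormat(pattern, DecimalFormatSymbols.getInstance(Locale.US)).format(value);
    }

    public static void main(String[] args) {
        System.out.println(format("0.###E0", 1234));      // 1.234E3
        System.out.println(format("##0.#####E0", 12345)); // 12.345E3 (exponent is a multiple of 3)
        System.out.println(format("00.###E0", 0.00123));  // 12.3E-4 (always two integer digits)
    }
}
```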

Date formats

Use the following table to specify date formats. For further information on valid date formats used in this step, view the Date Formatting Table.

| Letter | Date or Time Component | Presentation | Examples |
| --- | --- | --- | --- |
| G | Era designator | Text | AD |
| y | Year | Year | 1996 or 96 |
| M | Month in year | Month | July, Jul, or 07 |
| w | Week in year | Number | 27 |
| W | Week in month | Number | 2 |
| D | Day in year | Number | 189 |
| d | Day in month | Number | 10 |
| F | Day of week in month | Number | 2 |
| E | Day in week | Text | Tuesday or Tue |
| a | Am/pm marker | Text | PM |
| H | Hour in day (0-23) | Number | 0 |
| k | Hour in day (1-24) | Number | 24 |
| K | Hour in am/pm (0-11) | Number | 0 |
| h | Hour in am/pm (1-12) | Number | 12 |
| m | Minute in hour | Number | 30 |
| s | Second in minute | Number | 55 |
| S | Millisecond | Number | 978 |
| z | Time zone | General time zone | Pacific Standard Time, PST, or GMT-08:00 |
| Z | Time zone | RFC 822 time zone | -0800 |
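The letters in this table match those of Java's `java.text.SimpleDateFormat`. Assuming the step follows the same rules, this sketch parses a date with one pattern and formats it with another. UTC and `Locale.US` are fixed so the output is reproducible, and strict parsing (`setLenient(false)`) is a choice made for this example, not a documented step setting. `DateFormatDemo` is a hypothetical name.

```java
import java.text.ParseException;
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.Locale;
import java.util.TimeZone;

public class DateFormatDemo {
    // Parses text with one pattern and formats the result with another.
    // UTC and Locale.US keep the output independent of the machine settings.
    public static String reformat(String text, String inputPattern, String outputPattern) {
        TimeZone utc = TimeZone.getTimeZone("UTC");
        SimpleDateFormat input = new SimpleDateFormat(inputPattern, Locale.US);
        input.setTimeZone(utc);
        input.setLenient(false); // reject impossible dates such as month 13
        SimpleDateFormat output = new SimpleDateFormat(outputPattern, Locale.US);
        output.setTimeZone(utc);
        try {
            Date date = input.parse(text);
            return output.format(date);
        } catch (ParseException e) {
            throw new IllegalArgumentException("Cannot parse: " + text, e);
        }
    }

    public static void main(String[] args) {
        // HH reads the 24-hour clock; hh and a print the 12-hour clock with a marker.
        System.out.println(reformat("1996.07.10 15:08:56", "yyyy.MM.dd HH:mm:ss",
                "EEE, d MMM yyyy hh:mm a"));                                  // Wed, 10 Jul 1996 03:08 PM
        System.out.println(reformat("10/07/1996", "dd/MM/yyyy", "yyyy-MM-dd")); // 1996-07-10
    }
}
```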

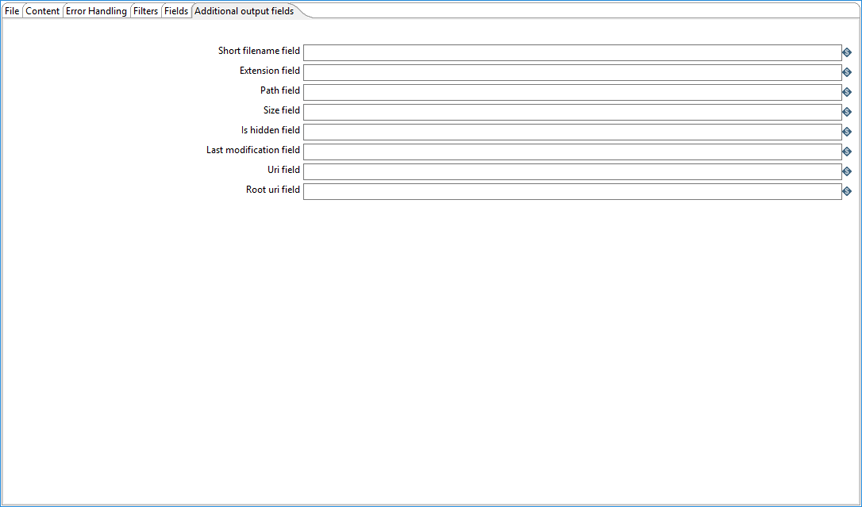

Additional output fields tab

The Additional output fields tab contains the following options to specify additional information about the file to process.

| Option | Description |
| --- | --- |
| Short filename field | Specify the field that contains the filename without path information but with an extension. |
| Extension field | Specify the field that contains the extension of the filename. |
| Path field | Specify the field that contains the path in operating system format. |
| Size field | Specify the field that contains the size of the file. |
| Is hidden field | Specify the field indicating whether the file is hidden (Boolean). |
| Last modification field | Specify the field indicating the date of the last time the file was modified. |
| Uri field | Specify the field that contains the URI. If you are using this step to extract data from Amazon Simple Storage Service (S3), browse to the URI of the S3 system or use this option. S3 and S3n are supported. |
| Root uri field | Specify the field that contains the root URI. |
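To see what kind of values these fields carry, the following Java sketch derives similar information for a local file with `java.nio.file`. The map keys are hypothetical field names, and treating the path as the parent directory and the root URI as the URI of the file system root are assumptions. The sketch covers local files only, not S3 or other virtual file systems. `FileInfoDemo` is a hypothetical name, and `Files.writeString` requires Java 11 or later.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.LinkedHashMap;
import java.util.Map;

public class FileInfoDemo {
    // Collects values similar to the Additional output fields for one local file.
    // The keys are hypothetical field names chosen for this example.
    public static Map<String, Object> describe(Path file) {
        Path absolute = file.toAbsolutePath();
        String shortName = absolute.getFileName().toString();
        int dot = shortName.lastIndexOf('.');
        Map<String, Object> info = new LinkedHashMap<>();
        info.put("short_filename", shortName);                             // name with extension
        info.put("extension", dot >= 0 ? shortName.substring(dot + 1) : "");
        info.put("path", absolute.getParent().toString());                 // assumption: parent directory
        try {
            info.put("size", Files.size(absolute));                        // bytes
            info.put("is_hidden", Files.isHidden(absolute));
            info.put("last_modified", Files.getLastModifiedTime(absolute).toInstant());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        info.put("uri", absolute.toUri().toString());
        info.put("root_uri", absolute.getRoot().toUri().toString());      // assumption: file system root
        return info;
    }

    public static void main(String[] args) throws IOException {
        Path sample = Files.createTempFile("orders", ".csv");
        Files.writeString(sample, "id;amount\n1;10\n");
        describe(sample).forEach((key, value) -> System.out.println(key + " = " + value));
        Files.delete(sample);
    }
}
```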

Metadata injection support

All fields of this step support metadata injection. You can use this step with ETL metadata injection to pass metadata to your transformation at runtime.