Spark Submit

You can use the Spark Submit job entry in PDI to launch Spark jobs on any vendor version that PDI supports.

Using Spark Submit, you can submit Spark applications, which you have written in either Java, Scala, or Python to run Spark jobs in YARN-cluster or YARN-client mode. See Using Spark Submit for more information.

Before you begin

Before you install Spark, we strongly recommend that you review the following Spark documentation, release notes, and known issues first:

- https://spark.apache.org/releases/

- https://spark.apache.org/docs/latest/configuration.html

- https://spark.apache.org/docs/latest/running-on-yarn.html

- Spark installation and configuration documentation

You may also want to reference the https://spark.apache.org/docs/2.3.2/submitting-applications.html instructions about how to submit jobs for Spark.

Install and configure Spark client for PDI use

You will need to install and configure the Spark client on every machine where you want to run Spark jobs using PDI. The configuration of the client depends on your version of Spark. Pentaho supports Cloudera Distribution of Spark (CDS) versions 2.3.x and 2.4.x.

Spark version 2.x.x

Procedure

On the client, download the Spark distribution of the same or higher version as the one used on the cluster.

Set the HADOOP_CONF_DIR environment variable to a folder containing cluster configuration files as shown in the following sample for an already-configured driver:

<username>/.pentaho/metastore/pentaho/NamedCluster/Configs/<user-defined connection name>

Navigate to <SPARK_HOME>/conf and create the spark-defaults.conf file using the instructions outlined in https://spark.apache.org/docs/latest/configuration.html.

Create a ZIP archive containing all the JAR files in the SPARK_HOME/jars directory.

Copy the ZIP file from the local file system to a world-readable location on the cluster.

Edit the spark-defaults.conf file to set the spark.yarn.archive property to the world-readable location of your ZIP file on the cluster as shown in the following examples:

spark.yarn.archive hdfs://NameNode hostname:8020/user/spark/lib/your ZIP file

Add the following line of code to the spark-defaults.conf file:

spark.hadoop.yarn.timeline-service.enabled false

If you are connecting to an HDP cluster, add the following lines in the spark-defaults.conf file:

spark.driver.extraJavaOptions -Dhdp.version=2.3.0.0-2557spark.yarn.am.extraJavaOptions -Dhdp.version=2.3.0.0-2557

NoteThe -Dhdp version should be the same as Hadoop version used on the cluster.If you are connecting to an HDP cluster, also create a text file named java-opts in the <SPARK_HOME>/conf folder and add your HDP version to it as shown in the following example:

-Dhdp.version=2.3.0.0-2557

NoteRun the hdp-select status Hadoop client command to determine your version of HDP.If you are connecting to a supported version of the HDP or CDH cluster, open the core-site.xml file, then comment out the net.topology.script.file property as shown in the following code block:

<!-- <property> <name>net.topology.script.file.name</name> <value>/etc/hadoop/conf/topology_script.py</value> </property> -->

Results

General

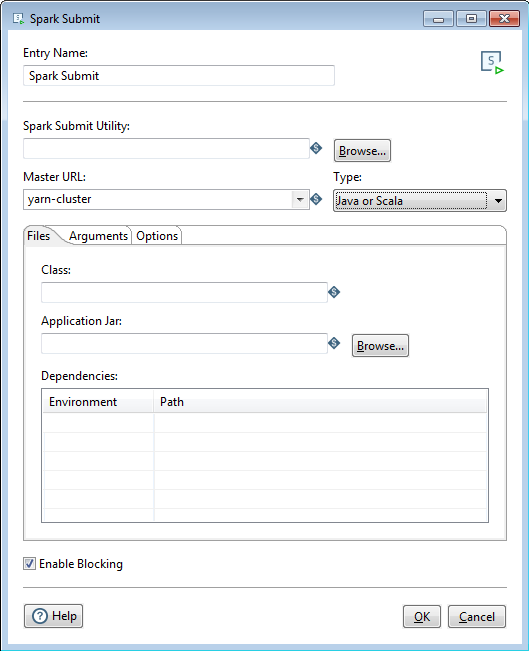

Set up information for the Spark Submit job entry is detailed below. For additional information about configuring the Spark-submit utility tools, see the Cloudera documentation.

The following table describes the fields for setting up your Spark job:

| Field | Description |

| Entry Name | Specify the name of the entry. You can customize it or leave it as the default. |

| Spark Submit Utility | Specify the name of the script that launches the Spark job, which

is the batch/shell file name of the underlying spark-submit tool. For example,

Spark2-submit. |

| Master URL | Choose a master URL for the cluster from the drop-down:

|

| Type |

Select the file type of the Spark job you want to submit. Your job can be written in Java, Scala, or Python. The fields displayed in the Files tab will depend on what language option you select. Python support on Windows requires Spark version 2.3.x or higher. |

| Enable Blocking | Select Enable Blocking to have the Spark Submit entry wait until the Spark job finishes running. If this option is not selected, the Spark Submit entry proceeds with its execution once the Spark job is submitted for execution. Blocking is enabled by default. |

We support the yarn-cluster and yarn-client modes. For descriptions of the modes, see the Spark documentation.

Options

The Spark Submit entry features several tabs with fields. Each tab is described below.

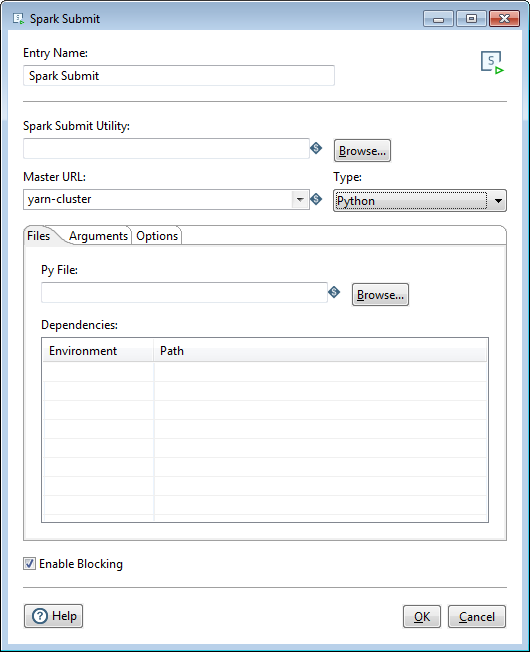

Files tab

The fields of this tab depend on whether you set the Spark job Type to Java or Scala or Python.

Java or Scala

If you select Java or Scala as the file Type, the Files tab will contain the following options:

| Option | Description |

| Class | Optionally, specify the entry point for your application. |

| Application Jar | Specify the main file of the Spark job you are submitting. It is a path to a bundled jar including your application and all dependencies. The URL must be globally visible inside of your cluster, for instance, an hdfs:// path or a file:// path that is present on all nodes. |

| Dependencies | Specify the Environment and Path of other packages, bundles, or libraries used as a part of your Spark job. Environment defines whether these dependencies are Local to your machine or Static on the cluster or the web. |

Python

If you select Python as the file Type, the Files tab will contain the following options:

| Option | Description |

| Py File | Specify the main Python file of the Spark job you are submitting. |

| Dependencies | Specify the Environment and Path of other packages, bundles, or libraries used as a part of your Spark job. Environment defines whether these dependencies are Local to your machine or Static on the cluster or the web. |

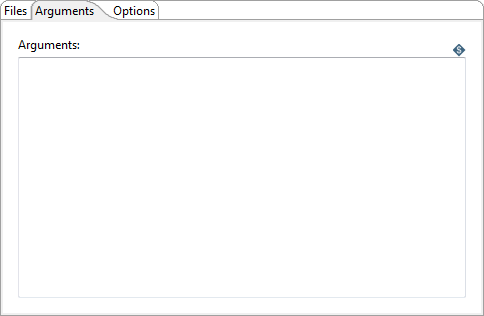

Arguments tab

Enter the following information to pass arguments to the Spark job:

| Option | Description |

| Arguments | Specify and arguments passed to your main Java class, Scala class, or Python Py file through the text box provided. |

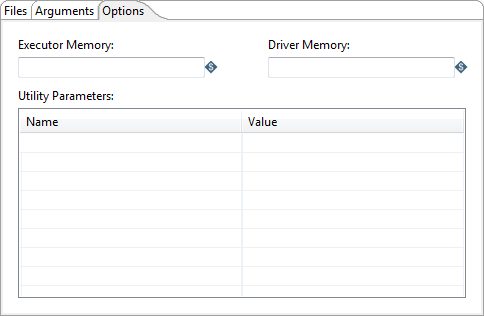

Options tab

Enter the following information to adjust the amount of memory used or define optional parameters:

| Option | Description |

| Executor Memory | Specify the amount of memory to use per executor process. Use the JVM format (for example, 512m or 2g). |

| Driver Memory | Specify the amount of memory to use per driver. Use the JVM format (for example, 512m or 2g). |

| Utility Parameters |

Specify the Name and Value of optional Spark configuration parameters associated with the spark-defaults.conf file. To set up job entries on secure clusters, see Use Kerberos with Spark Submit. |

Troubleshooting your configuration

Errors may occur when you are trying to run a Spark Submit job entry:

- If execution of your Spark application was unsuccessful within PDI, then verify and validate the application by running the Spark-submit command line tool in a Command Prompt or Terminal window on the same machine that is running PDI.

- If you want to view and track the Spark jobs that you have submitted, you can use the YARN ResourceManager Web UI to review the resources that were used, the duration, and access to additional log info.

Running a Spark job from a Windows machine

The following errors may occur when running a Spark Submit job from a Windows machine:

- ERROR yarn.ApplicationMaster: Uncaught exception: org.apache.spark.SparkException: Failed to connect to driver! - JobTracker's log

- Stack trace: ExitCodeException exitCode=10 - Spoon log

To resolve these errors, create a new rule in the Windows firewall settings to enable inbound connections from the cluster.